The AI Skill Nobody's Practicing

On August 1, 2007, at 6:05 PM, 456 feet of the I-35W bridge in Minneapolis dropped into the Mississippi River. It was rush hour, and 111 vehicles were on the deck. Thirteen people died.

The bridge was 40 years old. It had been inspected every single year and every inspection produced a report, all of which were signed.

The National Transportation Safety Board (NTSB) found that the steel gusset plates holding the bridge's main trusses together were half as thick as they should have been. A design error from 1967... forty freakin years earlier. The bridge had been slowly failing for decades while inspectors noted the corrosion, filed their reports, and signed their names at the bottom.

17 years before it fell, the bridge was flagged “structurally deficient.”

And yet, nobody stopped driving across it.

The inspectors weren’t lazy. They weren’t lying. They did exactly what the process asked.

They looked and they noted.

They filed and they signed.

The process just never included the step where someone says, “This needs to close.”

So the inspection became paperwork. The signature became a rubber stamp, and the gap between “inspected” and “safe” grew one year at a time, until it was 456 feet wide and full of cars.

That was the non-obvious, obvious mistake. The checking never stopped. It just stopped meaning anything. And nobody could tell the difference from the outside until the bridge was in the river.

---

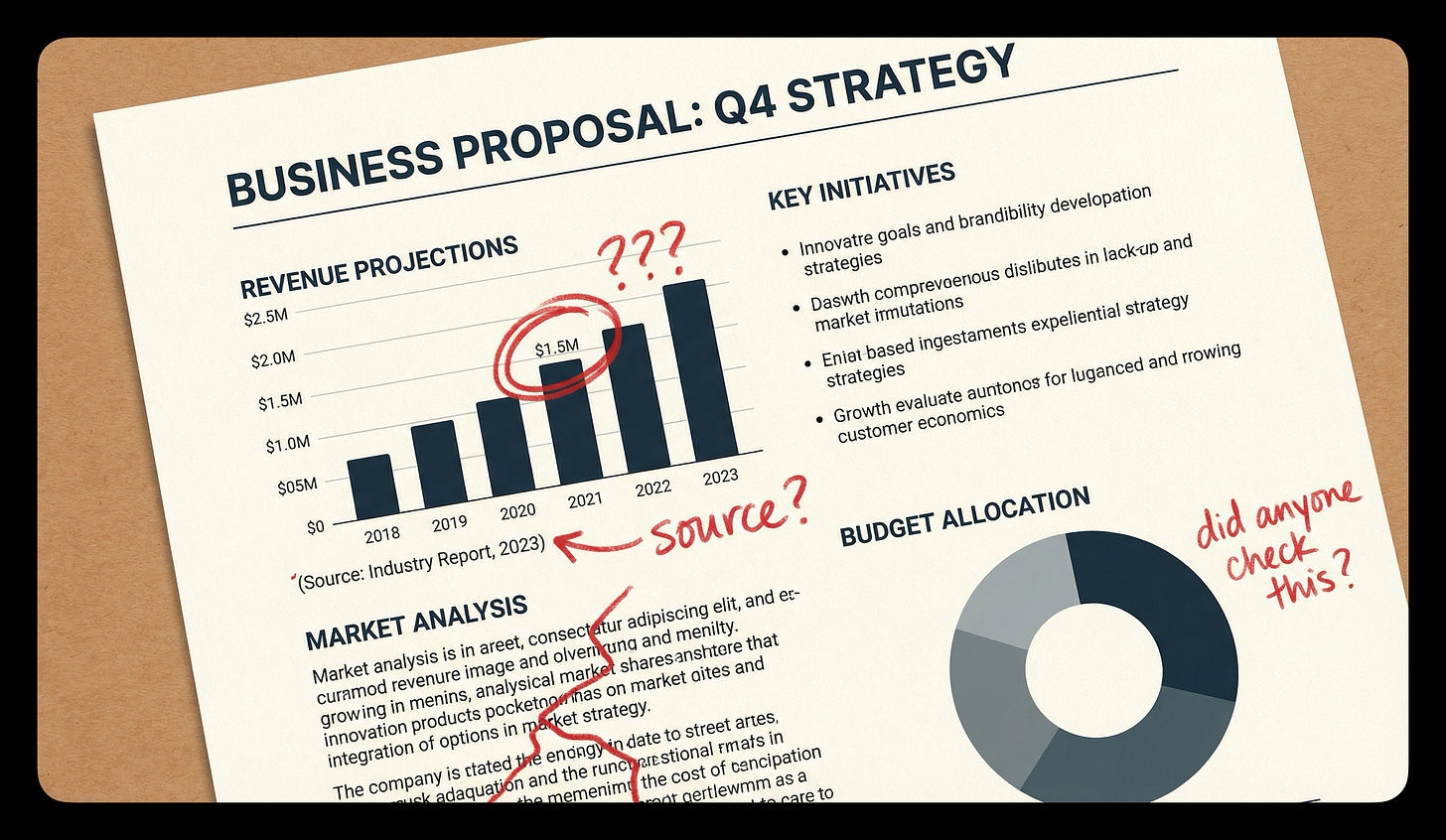

Now, think about the last time you reviewed something AI wrote for you. The email. The proposal. The slide deck.

How long did you look?

I know, I know... nobody dies when you paste an AI-generated paragraph into a slide deck. But the same human behavior is happening. It’s become so easy to copy 142 words into a PowerPoint that you end up on autopilot, slide after slide.

When that happens, your judgment and your taste take a backseat. The gap between “good enough” and “quality” gets a little wider every time, and you start to think nobody can tell the difference.

But Zapier can.

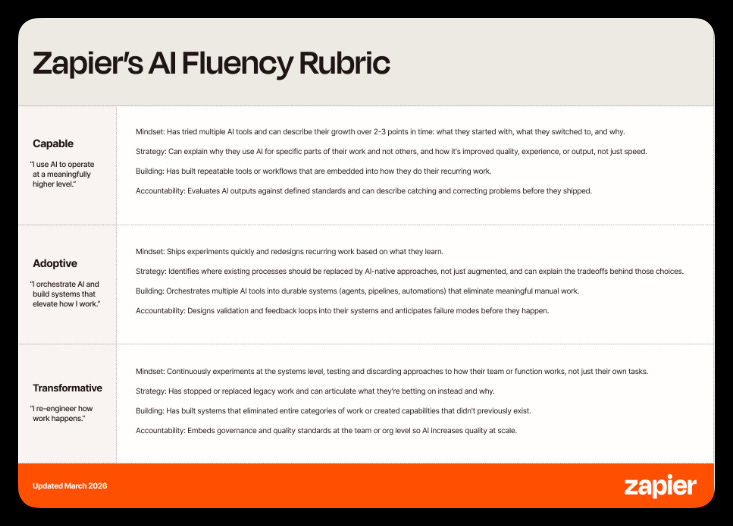

They just updated their AI fluency hiring rubric. They’ve been screening every candidate on AI skills for a year now. 100% adoption internally. The original rubric measured three things... mindset, strategy, and building.

They just added a fourth. Accountability.

Their Global Head of Talent put it plainly.

“With AI, you can delegate the work, but not the accountability.”

They’re now screening every hire for four specific behaviors.

- Define what good looks like before you start

- Evaluate outputs critically

- Catch what’s wrong before it ships

- Own the outcomes

What Erodes First

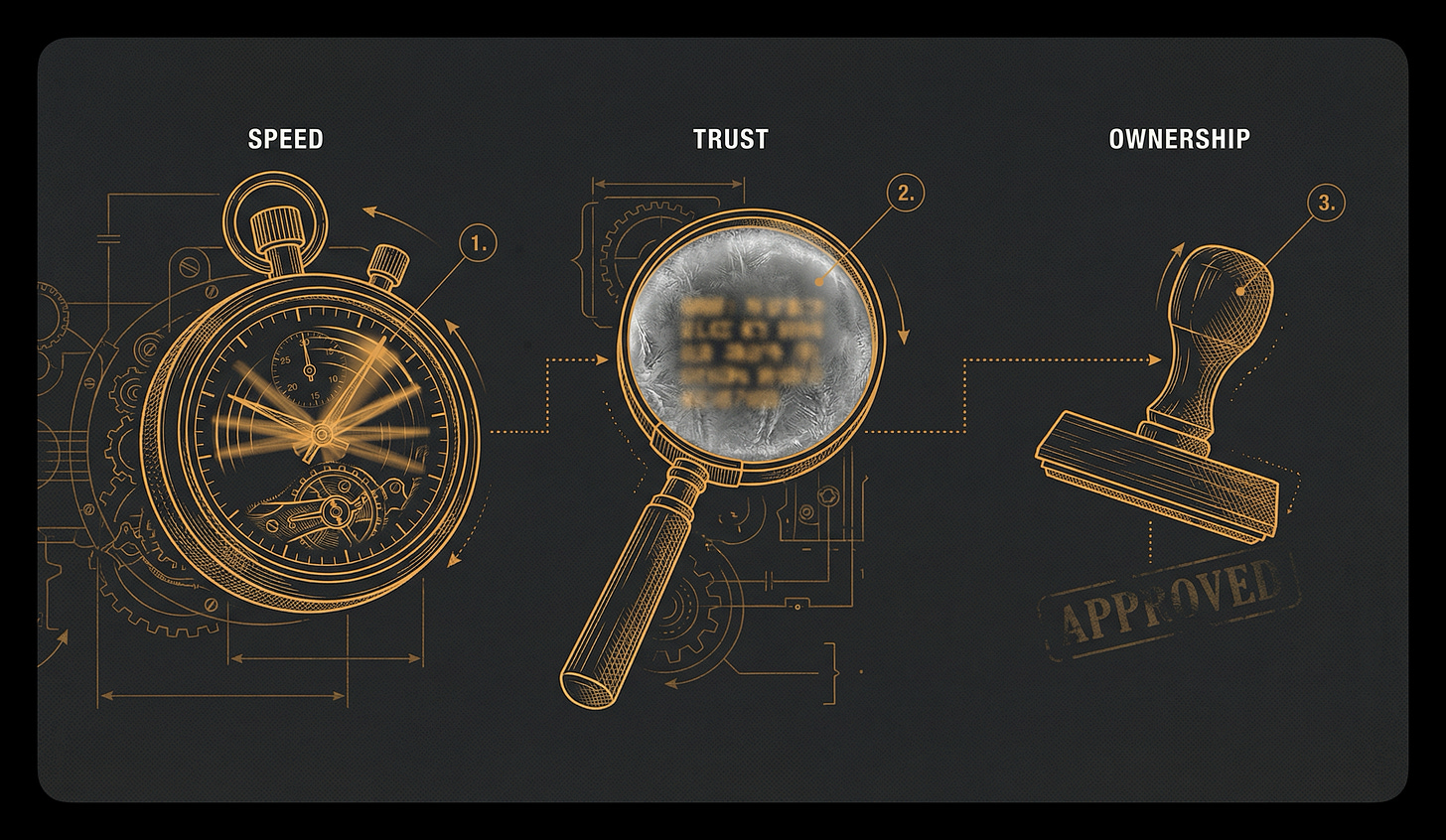

It starts with speed.

AI generates a draft in 8 seconds, and without realizing it, you compress your review to match. What used to be ten minutes with a pen in hand becomes ten seconds with your thumb hovering over send. You’re still reviewing, technically, but you’ve squeezed it down so far that it only creates the illusion of safety.

The cost is that your review becomes a glance. A glance catches a typo, but it doesn’t catch a wrong assumption, a misattributed claim, or a confidently stated number that’s off by 40%.

Then something sneakier happens.

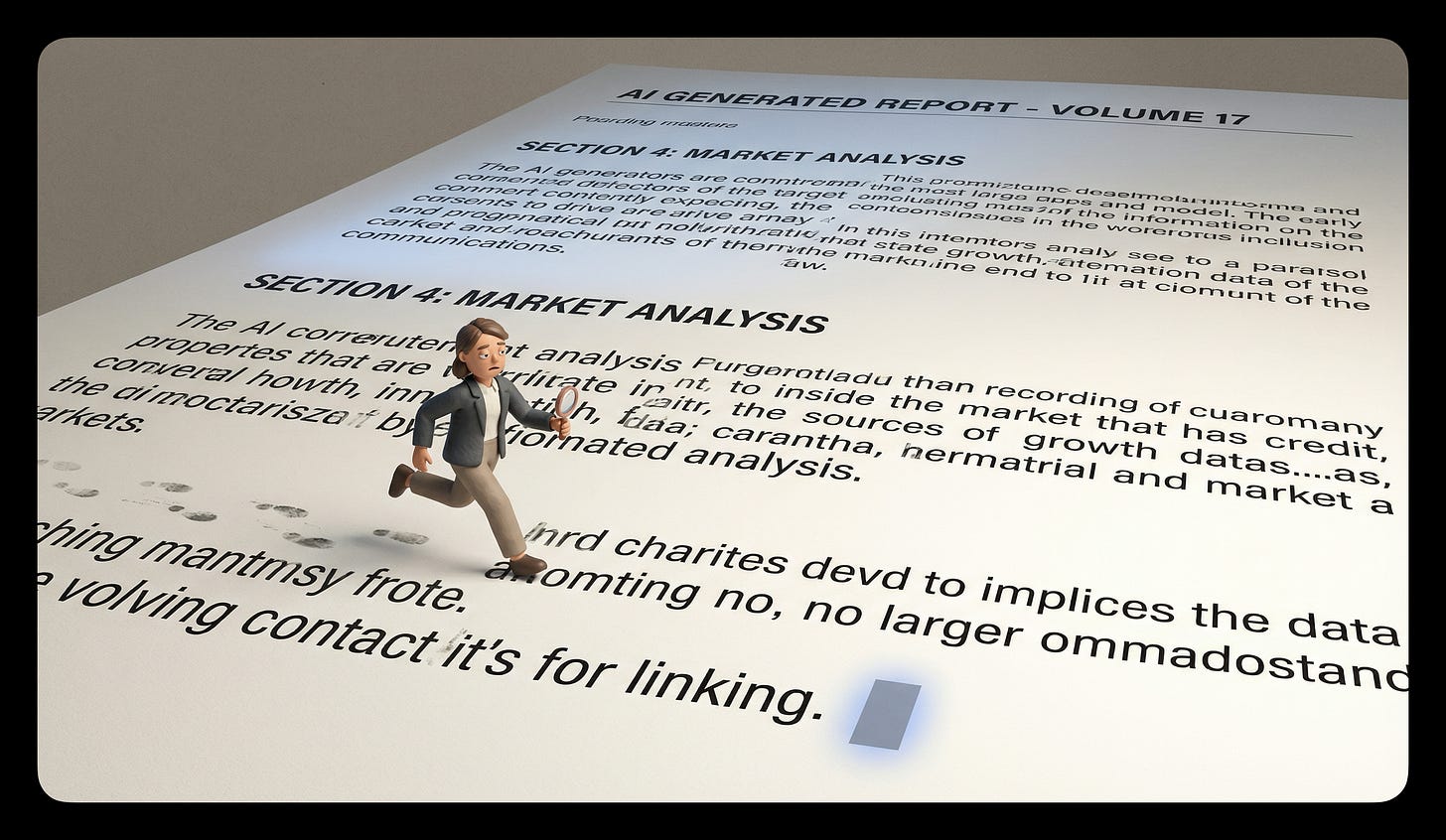

The output starts to look right. Every time. Clean formatting, confident tone, citations that sound real. The surface quality is so consistently polished that your brain starts using the surface as a proxy for the substance.

The horror stories are already piling up. In 2025, a California attorney named Amir Mostafavi used ChatGPT to write an appellate brief. He reviewed it. Filed it. Signed his name to it. Twenty-one of the twenty-three quotes in that brief were completely fabricated. The court fined him $10,000. He didn’t skip the review. He just didn’t verify anything, because the brief looked like a brief. Clean formatting, confident citations, paragraphs that read as if they were written by someone who knew what they were talking about.

Raja Parasuraman spent two decades studying this at George Mason University. His research on automation complacency showed that the more reliable an automated system is, the worse humans become at detecting its errors. The monitoring itself stops working. The pattern of “it’s always been right” quietly rewires how hard you look, and the better the tool gets, the worse you get at catching its mistakes.

You start to trust it because it’s earned your trust.

The cost is that “looks right” quietly replaced “is right,” and you didn’t notice the swap.

And then comes the part that actually matters. You stop feeling responsible for the output.

“The AI wrote it” becomes an escape hatch you’d never say out loud, but you feel it. A thin pane of glass between you and the thing you just sent. When it works, you take credit. When it’s wrong, there’s a half-second where your brain reaches for the tool instead of yourself.

This can feel like delegation. You’ve finally scaled yourself.

But like the other examples, the bill always comes at the end. The cost is that when the output is wrong (and it will be wrong), you can’t explain why. You can’t walk someone through your reasoning or point to the judgment call that led to the error, because there was no judgment call. There was a blinking cursor and a send button.

The bridge inspectors signed every report right up until the day it fell.

Why This Is Worse Now

A year ago, the default failure mode with AI was bad output. Hallucinations and obvious errors. The tool was unreliable enough that you had to check.

But then those stinkin’ tools got better. The output got cleaner. And the checking got softer.

Anthropic’s AI Fluency Index found that the strongest users aren’t the ones who reach for the most tools. They’re the ones who push back and iterate. The signal is in the friction.

But the default pulls the other way. Every interface update makes it faster to accept and harder to pause. One-click insertions. Auto-applied suggestions. Inline completions that fill your sentence before you finish the thought.

The tools are designing for speed. But who is designing for judgment?

---

One Question

Before you send the next thing AI wrote for you... email, proposal, code, slide, message... ask yourself one thing.

Could I explain why this is right?

Not “does this look right.” Not “did I read it.” Could you sit across from someone, point to any sentence, and explain why it’s right? Could you defend the number? Could you catch the error?

If the answer is no, you didn’t review it. You rubber-stamped it. The form is signed, and the bridge is still standing. For now.

Try it for one week. You’ll be surprised how often the honest answer is “I have no idea if this is right. I just know it looks right.”

That’s the gap. Inspected vs. safe. You can feel it the moment you name it.

---

You’re Scanning This Right Now

Your brain is doing it right now. You’re three paragraphs from the end and you’ve already filed this under “accountability, got it, moving on.”

But you haven’t done the thing. You haven’t thought about the specific last thing you sent. Not a general “yeah, I should be more careful.” The specific email, proposal, or message where you hit send without understanding what you were approving.

You know which one it is.

The inspectors on the I-35W bridge weren’t careless people. They were experienced professionals doing their job exactly as the system asked. The system just never included the step where someone says stop.

Nobody is going to redesign your send button to include a judgment check. That one’s yours.

---

The invisible assumptions running underneath the way you work. That’s what Beware the Default is about. If that’s your kind of room, we’d like to have you.

---

Sources

- National Transportation Safety Board (2008). *Collapse of I-35W Highway Bridge, Minneapolis, Minnesota, August 1, 2007.* Highway Accident Report NTSB/HAR-08/03.

- Parasuraman, R. & Manzey, D.H. (2010). “Complacency and Bias in Human Use of Automation: An Attentional Integration.” *Human Factors*, 52(3), 381-410.

- Anthropic (2025). *AI Fluency Index.*

- Zapier (2026). “One Year Later: Raising the AI Fluency Bar for Every Zapier Hire.”
